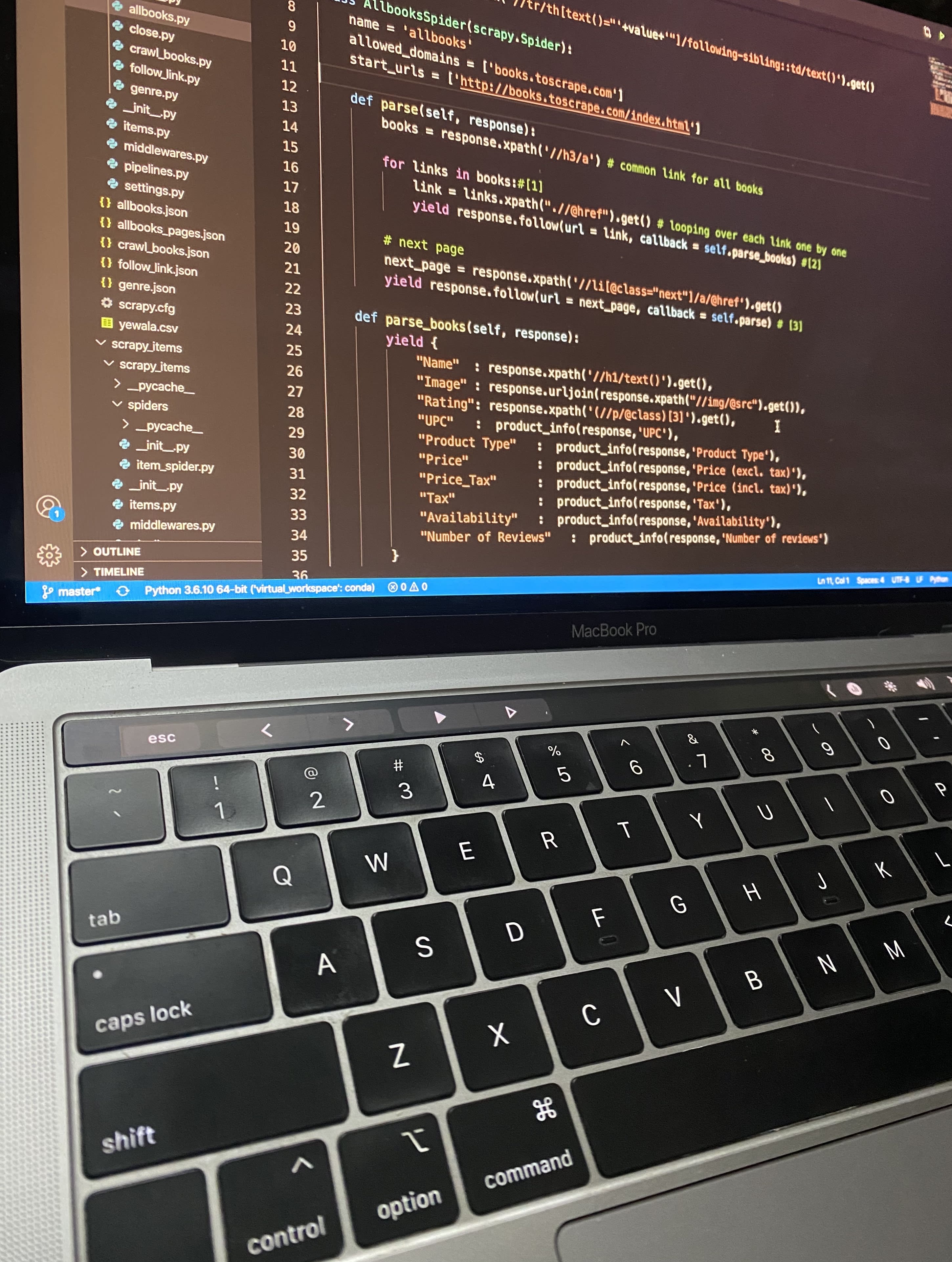

Feb 23, 2021

Web scraping, in simple terms, is the act of extracting data from websites. It can be a manual process or an automated one. Extracting data manually from web pages is tedious and repetitive, which is why an ecosystem of tools and libraries exists to automate it.


In a perfect world, every website provides free access to data with an easy-to-use API… but the world is far from perfect. However, it is possible to use web scraping techniques to manually extract data from websites by brute force. The following lesson examines two different types of web scrapers and implements them with NodeJS and Firebase Cloud Functions.

Frontend Integrations

This lesson is integrated with multiple frontend frameworks. Choose your favorite flavor 🍧.

Initial Setup

Let’s start by initializing Firebase Cloud Functions with JavaScript.

Strategy A - Basic HTTP Request

The first strategy makes an HTTP request to a URL and expects an HTML document string as the response. Retrieving the HTML is easy, but there are no browser APIs in NodeJS, so we need a tool like cheerio to process DOM elements and find the necessary metatags.

The advantage 👍 of this approach is that it is fast and simple, but the disadvantage 👎 is that it will not execute JavaScript and/or wait for dynamically rendered content on the client.
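A minimal sketch of the request step, assuming Node 18 or later, where `fetch` is available globally (the lesson itself uses node-fetch, which exposes the same interface). The `fetchHtml` name and the injectable `fetchImpl` parameter are illustrative and not part of the original lesson.

```javascript
// Fetch a URL and return the raw HTML string.
// `fetchImpl` is a hypothetical parameter so that node-fetch (or a stub)
// can be passed in; by default it uses the global fetch from Node 18+.
async function fetchHtml(url, fetchImpl = globalThis.fetch) {
  const response = await fetchImpl(url);
  if (!response.ok) {
    throw new Error(`Request failed with status ${response.status}`);
  }
  // The body is plain text at this point: no DOM and no executed scripts.
  return response.text();
}
```

The returned value is only the markup the server sent, so anything a page renders later with client-side JavaScript will be missing from it.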

Link Preview Function

💡 It is not possible to generate link previews entirely from the frontend: the browser's same-origin policy blocks client-side code from reading HTML from third-party sites unless those sites opt in with CORS headers.

An excellent use-case for this strategy is a link preview service that shows the name, description, and image of a third-party website when a URL is posted into an app. For example, when you post a link into an app like Twitter, Facebook, or Slack, it renders a nice-looking preview.

Link previews are made possible by scraping the metatags from the <head> of an HTML page. The code requests a URL, then looks for Twitter and OpenGraph metatags in the response body. Several supporting libraries are used to make the code simpler and more reliable.
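The metatag lookup can be sketched roughly as follows. The function assumes `$` is a document loaded with cheerio (for example `const $ = cheerio.load(html)`); the `readMetatags` name, the returned field names, and the order of the selector fallbacks are illustrative assumptions rather than the lesson's own code.

```javascript
// Read link-preview fields from a parsed document.
// `$` is assumed to be the result of `cheerio.load(html)`.
function readMetatags($, url) {
  // Try OpenGraph first, then Twitter cards, then a plain <meta name="...">.
  const getMeta = (name) =>
    $(`meta[property="og:${name}"]`).attr('content') ||
    $(`meta[name="twitter:${name}"]`).attr('content') ||
    $(`meta[name="${name}"]`).attr('content') ||
    null;

  return {
    url,
    // Fall back to the <title> element when no title metatag exists.
    title: getMeta('title') || $('title').first().text() || null,
    description: getMeta('description'),
    image: getMeta('image'),
  };
}
```

Fields that a page does not declare come back as `null`, so the caller can decide how to render a partial preview.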

- cheerio is a NodeJS implementation of jQuery.

- node-fetch is a NodeJS implementation of the browser Fetch API.

- get-urls is a utility for extracting URLs from text.
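To illustrate what the URL-extraction step does, here is a rough, dependency-free sketch. It is a simplified stand-in and not how get-urls is implemented; the `extractUrls` name and the regular expression are assumptions made for this example.

```javascript
// Pull http(s) URLs out of free-form text and return them de-duplicated.
function extractUrls(text) {
  const candidates = text.match(/https?:\/\/[^\s<>"']+/g) || [];
  const urls = new Set();
  for (const candidate of candidates) {
    // Drop punctuation that usually belongs to the sentence, not the link.
    const trimmed = candidate.replace(/[.,;:!?)\]]+$/, '');
    try {
      // The URL constructor validates and normalizes the value.
      urls.add(new URL(trimmed).href);
    } catch {
      // Not a valid URL after all; skip it.
    }
  }
  return [...urls];
}
```

A real library covers many more edge cases (bare domains, unusual punctuation, query-string normalization), which is a reasonable argument for using one instead of a hand-written pattern.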

Let’s start by building a

HTTP Function

You can use the scraper in an HTTP Cloud Function.
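One way to wire this up is sketched below. The handler is built around any async `scrape(text)` function so it can be exercised without Firebase; `createScraperHandler`, `scrapeMetatags`, and the `{ text }` request body shape are hypothetical names chosen for this example.

```javascript
// Build an Express-style request handler around an async `scrape(text)` function.
function createScraperHandler(scrape) {
  return async (req, res) => {
    let body;
    try {
      // The body may arrive as a JSON string or as an already-parsed object.
      body = typeof req.body === 'string' ? JSON.parse(req.body) : req.body;
    } catch {
      body = null;
    }
    if (!body || typeof body.text !== 'string') {
      res.status(400).send({ error: 'Expected a JSON body with a "text" field' });
      return;
    }
    try {
      const data = await scrape(body.text);
      res.status(200).send(data);
    } catch (err) {
      res.status(500).send({ error: err.message });
    }
  };
}

// In functions/index.js this could be exported roughly as:
//   const functions = require('firebase-functions');
//   exports.scraper = functions.https.onRequest(createScraperHandler(scrapeMetatags));
```

Keeping the scraping logic separate from the Cloud Functions wrapper also makes it easy to reuse the same function from a callable function or a script.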

At this point, you should receive a response by opening http://localhost:5000/YOUR-PROJECT/REGION/scraper

Strategy B - Puppeteer for Full Browser Rendering

What if you want to scrape a single-page JavaScript app built with a framework like Angular or React? Or maybe you want to click buttons and/or log into an account before scraping? These tasks require a fully emulated browser environment that can execute JavaScript and handle events.

Puppeteer is a tool built on top of headless Chrome, which allows you to run the Chrome browser on the server. In other words, you can fully interact with a website before extracting the data you need.
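As a small illustration, the sketch below loads a page in the browser and returns the HTML after client-side rendering. It assumes `browser` was created with `puppeteer.launch()`; the `renderPage` name is hypothetical.

```javascript
// Load a page in a real browser engine and return the rendered HTML.
// `browser` is assumed to come from `puppeteer.launch()`.
async function renderPage(browser, url) {
  const page = await browser.newPage();
  try {
    // 'networkidle0' waits until there have been no network connections
    // for at least 500 ms, giving client-side frameworks time to render.
    await page.goto(url, { waitUntil: 'networkidle0' });
    return await page.content();
  } finally {
    // Always release the tab, even if navigation fails.
    await page.close();
  }
}
```

Unlike a plain HTTP request, the string returned here reflects the DOM after scripts have run, at the cost of starting a full browser for each scrape.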

Instagram Scraper

Instagram on the web uses React, which means we won’t see any dynamic content until the page is fully loaded. Puppeteer is available in the Cloud Functions runtime, allowing you to spin up a Chrome browser on your server. It will render JavaScript and handle events just like the browser you’re using right now.

First, the function logs into a real Instagram account. The page.type method will find the corresponding DOM element and type characters into it. Once logged in, we navigate to a specific username and wait for the img tags to render on the screen, then scrape the src attribute from them.
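The flow described above might look roughly like this. It is a sketch under several assumptions: `browser` comes from `puppeteer.launch()`, the login URL and the form selectors are guesses at Instagram's markup that may need updating, and `scrapeProfileImages` along with its option names is hypothetical.

```javascript
// Log in, open a profile, and collect the image URLs shown on the page.
// `browser` is assumed to come from `puppeteer.launch()`.
async function scrapeProfileImages(browser, { username, password, profile }) {
  const page = await browser.newPage();
  try {
    // Assumed login URL and selectors; verify them against the live site.
    await page.goto('https://www.instagram.com/accounts/login/');
    await page.waitForSelector('input[name="username"]');

    // page.type finds the matching element and sends the text key by key.
    await page.type('input[name="username"]', username);
    await page.type('input[name="password"]', password);
    await Promise.all([
      page.waitForNavigation(),
      page.click('button[type="submit"]'),
    ]);

    // Visit the profile and wait until at least one image is visible.
    await page.goto(`https://www.instagram.com/${profile}/`);
    await page.waitForSelector('img', { visible: true });

    // The callback runs inside the browser and returns each image's src.
    return await page.$$eval('img', (imgs) => imgs.map((img) => img.src));
  } finally {
    await page.close();
  }
}
```

Credentials should come from environment configuration rather than source code, and automated logins may be subject to the site's terms of use and rate limits.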